When I decided to rebuild my personal website, I had a simple goal: I wanted a system where I could just write. No complex databases, no heavy CMS to maintain - just simple Markdown files that I could commit to Git, and magically, the site would update. The simplest CMS possible.

After looking at a few options, I settled on Hugo. It’s fast, flexible, and works beautifully with Markdown. It was exactly what I was looking for, and for the most part, it has been working great.

In keeping with my theme of simplicity, I wanted to take advantage of the simple yet powerful (and amazing) S3! Amazon has put a tremendous amount of work into making S3 a “Simple” storage solution. The humble storage bucket is actually a powerhouse capable of serving an entire website to the world. There’s a certain beauty in its simplicity: you upload your files, enable static website hosting, and suddenly you have a highly resilient web server without the overhead of managing a single instance. It’s the perfect, elegant backbone for a site like mine, turning the complex task of web hosting into something that feels almost effortless.

The Deployment Pipeline

To achieve the “magic” part of my requirements, I built a deployment pipeline using GitLab CI. The idea was straightforward: every git push should trigger a build of the Hugo site, deploy the static files to an AWS S3 bucket, and invalidate the CloudFront cache so the new content appears immediately.
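Those three steps can be sketched as a single shell function that a CI job might call. This is an illustration rather than the actual pipeline script: the function name `deploy_site` and its two arguments (bucket name and CloudFront distribution ID) are placeholders, and it assumes `hugo` and the AWS CLI are installed and authenticated on the runner.

```shell
#!/usr/bin/env bash
# Sketch of the build -> upload -> invalidate flow.
# deploy_site, the bucket name, and the distribution ID are hypothetical.

deploy_site() {
  local bucket="$1" distribution_id="$2"

  # 1. Build the static site; Hugo writes its output to ./public by default.
  hugo --minify || return 1

  # 2. Upload new and changed files to the bucket.
  aws s3 sync public/ "s3://${bucket}/" || return 1

  # 3. Invalidate every cached path so CloudFront serves the new content.
  aws cloudfront create-invalidation \
    --distribution-id "${distribution_id}" \
    --paths "/*" || return 1
}

# Usage (placeholder values):
#   deploy_site "my-website-bucket" "$DISTRIBUTION_ID"
```

Each step returns early on failure, so a broken build never reaches the upload or invalidation steps.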

It felt empowering to have a fully automated pipeline. I could focus entirely on content and code, knowing that the infrastructure would handle the rest.

The “Oops” Moment

However, automation is a double-edged sword. While setting up the .gitlab-ci.yml file, I used AI to help generate the deployment script. It gave me a clean, efficient-looking .gitlab-ci.yml to sync my files to S3. I glanced through the file and everything looked right.

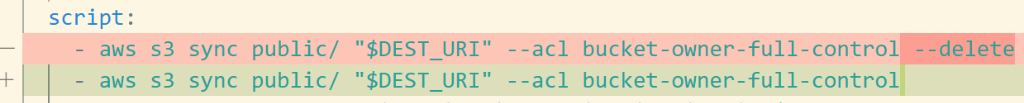

What I didn’t realize at the time was that the generated command included the --delete flag for aws s3 sync.

For those unfamiliar, this flag tells the AWS CLI to delete any files in the destination bucket that don’t exist in the source directory. Since neither my local build environment nor the CI runner had the accumulated history of experiments and guides I’d created over the last six months, the sync command did exactly what it was told: it made the remote bucket match my fresh build.

In seconds, six months of guides and experiments vanished.
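A dry run would have surfaced this before anything was removed. Below is a minimal sketch, assuming the AWS CLI's usual `(dryrun) delete:` line prefix in its `--dryrun` output; the helper names `planned_deletes` and `safe_sync` are made up for illustration.

```shell
# Count the deletions that `aws s3 sync --delete` would perform,
# without changing anything in the bucket.
planned_deletes() {
  local source_dir="$1" bucket="$2" plan
  # --dryrun prints each planned operation instead of executing it.
  plan="$(aws s3 sync "${source_dir}" "s3://${bucket}/" --delete --dryrun)" || return 1
  # grep -c exits non-zero when the count is 0, so mask that case.
  printf '%s\n' "${plan}" | grep -c '^(dryrun) delete:' || true
}

# Sync only when nothing in the bucket would be deleted.
safe_sync() {
  local source_dir="$1" bucket="$2" count
  count="$(planned_deletes "${source_dir}" "${bucket}")" || return 1
  if [ "${count}" -gt 0 ]; then
    echo "Refusing to sync: ${count} object(s) would be deleted from s3://${bucket}/" >&2
    return 1
  fi
  aws s3 sync "${source_dir}" "s3://${bucket}/"
}
```

Another option, if the bucket deliberately holds files from other projects, is to keep `--delete` but protect those prefixes with `--exclude`, since files excluded by filters are also excluded from deletion.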

The Recovery

This could have been a disaster. But thankfully, I had adhered to one of the core principles of DevOps: everything as code.

Although I had lost the files on S3, all those assets were themselves generated and deployed via their own GitLab pipelines. Recovery was as simple as finding the last successful jobs for those projects and re-running them. Within minutes, I was back in business.

Lessons Learned

At the end of the day, it was an easy fix, but it served as a valuable reminder:

Always review AI-generated code: AI is a powerful assistant, but it lacks context. It didn’t know I had other files in that bucket I wanted to keep. It just gave me the standard “sync” command.

Enable Bucket Versioning: If I hadn’t had the pipelines to re-deploy, S3 versioning would have been my safety net, allowing me to undelete the files. I ended up enabling this to prevent future heart attacks.
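For reference, here is a rough sketch of what enabling versioning and undeleting a file can look like with the AWS CLI. The function names and the bucket and key arguments are placeholders, and the undelete helper assumes keys without single quotes in them. In a versioned bucket, a delete only adds a delete marker on top of the object, so removing that marker brings the previous version back.

```shell
# Turn on versioning for a bucket (bucket name passed as $1).
enable_versioning() {
  aws s3api put-bucket-versioning \
    --bucket "$1" \
    --versioning-configuration Status=Enabled
}

# "Undelete" one key by removing its current delete marker.
undelete_object() {
  local bucket="$1" key="$2" marker_id
  # Find the version ID of the delete marker that is currently the latest
  # version of this exact key; --output text prints "None" if there is none.
  marker_id="$(aws s3api list-object-versions \
    --bucket "${bucket}" --prefix "${key}" \
    --query "DeleteMarkers[?Key=='${key}' && IsLatest].VersionId | [0]" \
    --output text)" || return 1
  if [ -z "${marker_id}" ] || [ "${marker_id}" = "None" ]; then
    echo "No current delete marker for ${key}" >&2
    return 1
  fi
  # Deleting the marker itself makes the previous version current again.
  aws s3api delete-object \
    --bucket "${bucket}" --key "${key}" --version-id "${marker_id}"
}

# Usage (placeholder values):
#   enable_versioning my-website-bucket
#   undelete_object my-website-bucket guides/example.html
```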

Lifecycle Policies: Configuring lifecycle policies along with versioning helps manage costs while keeping a history of your data. I configured a policy that keeps the three most recent noncurrent versions of each object and expires any older ones one day after they become noncurrent, giving me a buffer without blowing up my storage costs.

Here is the lifecycle.json configuration I used:

```json
{
  "Rules": [
    {
      "ID": "KeepLast3NoncurrentVersions",
      "Filter": {},
      "Status": "Enabled",
      "NoncurrentVersionExpiration": {
        "NoncurrentDays": 1,
        "NewerNoncurrentVersions": 3
      }
    }
  ]
}
```

You can apply this using the AWS CLI:

```shell
aws s3api put-bucket-lifecycle-configuration --bucket my-website-bucket --lifecycle-configuration file://lifecycle.json
```

For more details, check out the official guide on setting lifecycle configuration.

Building this site has been a journey of learning, not just about Hugo and static site generators, but about the importance of resilient infrastructure practices. And hey, at least now I have a good story for the blog!